The culture of reviewing, on apps at least, has shifted from assessment in the traditional sense to a way of showing support. All the podcasts and YT channels remind listeners and viewers to "give us a rating" because "they help a lot." How do they help? I'm a little fuzzy on that. So, you end up with a number of different groups:

1. Those who feel obligated to rate

2. Those who are fans

3. Those who are not fans

4. The people who don't rate

With most social media that people commit themselves to engaging with, fans are going to outnumber non-fans by a wide margin. So the ratings skew high there. How do the obligated rate? And how many are there? Not sure.

My thesis would be that the way ratings work in social media spaces and are asked for ("it would really help if you would rate us") leads to inflated ratings because 1) fans are more likely to rate, and to rate higher, and 2) a not insignificant number of people "5 star" things out of obligation.

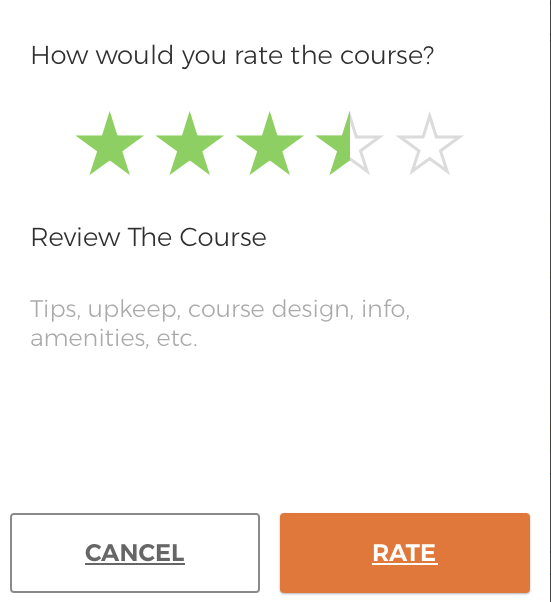

DGCR seems to be largely situated in an older tradition aligned with movies, books, restaurants, etc. The result is fewer reviews, but ones largely undertaken purposefully with the intent of providing a valid assessment (valid as defined by the person reviewing) so as to contribute to accurate rankings and to aid other players considering the course.

I think the distinction helps explain, in part, the "how dare you rate my course in alignment with your assessment when I put so much work into it" reaction. That type of assessment is not made "in support." The social media "help us by rating us" idea doesn't much care about the end user (the player looking into playing the course). The ratings are for the sake of securing rankings. I mean, have you looked at UDisc's best rated courses by state? Ewww.